Five years ago, your Postgres handled everything. Users, orders, a handful of dashboard queries. The database sat comfortably behind your application, and the hardest operational question was whether to bump shared_buffers or add a read replica.

That era is over. Today, AI agents generate thousands of embedding lookups per user session. Observability pipelines push 100K events per second. Product teams want real-time analytics on user behavior, not yesterday's batch report. The shape of the data, the velocity of the writes, and the concurrency of the queries have all changed at the same time.

The traditional response to this pressure is to split: Postgres for transactions, a warehouse for analytics, ETL pipelines in between. But that response was designed for a slower era. It introduces cost, latency, and operational complexity that modern teams cannot absorb.

What is emerging instead is a unified stack. A Postgres that is fast on local storage, fully managed so teams ship product instead of operating infrastructure, and natively integrated with analytical capabilities you activate when you need them. No re-platforming. No ETL tax. A database that grows with your application.

This article walks through why modern workloads break traditional Postgres, what a purpose-built managed Postgres looks like, and how native ClickHouse integration creates a path from OLTP to real-time analytics without the usual pain.

Key takeaways

- AI and real-time workloads (vector search, high-frequency telemetry, agent-driven queries) generate I/O patterns that break Postgres's row-store and MVCC model at scale.

- The traditional response of splitting Postgres and a warehouse, bridged with ETL, costs teams thousands of engineering hours and hundreds of thousands of dollars annually.

- Performance: Local NVMe storage with collocated compute delivers up to 10x faster OLTP than network-attached disks, eliminating the primary bottleneck in managed Postgres.

- Developer experience: A fully managed Postgres with automatic HA, backups, and point-in-time recovery lets teams focus on product, not database operations.

- Native analytics: One-click CDC via ClickPipes and the pg_clickhouse extension bring real-time analytical queries into Postgres with no pipelines to maintain and no SQL to rewrite.

- Start simple, scale natively: Begin with high-performance Postgres. When you outgrow transactional limits, activate analytical integration without re-platforming or re-architecting.

Why do AI and real-time workloads break traditional Postgres?

The shape of modern data has changed in ways that stress every assumption Postgres makes about I/O, concurrency, and memory management.

Embedding models like OpenAI's text-embedding-3-large produce vectors with up to 3,072 dimensions. Each vector insert writes a wide row, updates indexes, and generates WAL. AI agents produce "chatty" query patterns with dozens of database calls per user interaction, not the predictable request-response cycle of traditional web applications. OpenAI's own infrastructure for ChatGPT, serving 800 million users, relies on roughly 50 read replicas and has offloaded write-heavy workloads to separate datastores because Postgres alone could not absorb the pressure.
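To put the row width in perspective, here is a rough back-of-the-envelope sketch. It assumes the vectors are stored with the pgvector extension's `vector` type, whose documentation gives the storage cost as 4 bytes per dimension plus 8 bytes of overhead. The one-million-row corpus is a hypothetical size, and index and WAL overhead are ignored.

```python
# Rough storage estimate for embedding vectors.
# Assumption: pgvector's `vector` type, documented as 4 * dimensions + 8 bytes.
# The one-million-row corpus below is a hypothetical example size.

PG_PAGE_BYTES = 8192  # default Postgres block size


def vector_bytes(dimensions: int) -> int:
    """Bytes needed to store one single-precision vector."""
    return 4 * dimensions + 8


def corpus_gib(dimensions: int, rows: int) -> float:
    """Raw vector payload for `rows` embeddings, in GiB (no index or WAL overhead)."""
    return vector_bytes(dimensions) * rows / 2**30


if __name__ == "__main__":
    per_vector = vector_bytes(3072)
    print(f"one 3,072-dim vector: {per_vector} bytes")
    print(f"fits in one 8 kB page: {per_vector <= PG_PAGE_BYTES}")
    print(f"1M vectors: {corpus_gib(3072, 1_000_000):.1f} GiB")
```

Under this assumption a single 3,072-dimension embedding is larger than an 8 kB page, so Postgres has to store it out of line (TOAST) instead of inline in the heap row, which adds to the work done per insert.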

These workloads trigger three specific failure modes.

First, WAL bloat. Under sustained write pressure, Postgres generates write-ahead log faster than replication slots can drain it. Consumers must explicitly acknowledge progress before WAL segments are released, and when they fall behind, the backlog compounds. The pg_wal directory fills the primary's disk. WAL archiving becomes a bottleneck rather than a safety net.

Second, buffer cache thrashing. When analytical scans (dashboards, reports, aggregation queries) run alongside OLTP, they evict hot transactional pages from shared buffers. The result is latency spikes across the board. Your checkout endpoint gets slower because someone ran a monthly revenue report.

Third, autovacuum starvation. High write rates accumulate dead tuples faster than autovacuum can process them. Autovacuum competes for the same disk I/O budget as production queries. When it falls behind, table bloat spirals, index efficiency degrades, and performance deteriorates in ways that are difficult to recover from without downtime.
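Each of these failure modes has a standard signal in Postgres's own statistics: WAL retained behind a replication slot (`restart_lsn` in `pg_replication_slots` compared with `pg_current_wal_lsn()`), the buffer cache hit ratio (`blks_hit` and `blks_read` in `pg_stat_database`), and the dead-tuple count at which autovacuum triggers (`autovacuum_vacuum_threshold` plus `autovacuum_vacuum_scale_factor` times the table's row count). The sketch below shows only the arithmetic on values you have already fetched. It does not connect to a database, and the sample numbers are hypothetical.

```python
# Arithmetic behind three common Postgres health signals.
# Inputs are values you would read from pg_replication_slots, pg_stat_database,
# and pg_stat_user_tables; the sample numbers at the bottom are hypothetical.


def lsn_to_int(lsn: str) -> int:
    """Convert a pg_lsn such as '16/B374D848' to a byte position.

    An LSN prints as two hex numbers: the high and low 32 bits of a 64-bit offset.
    """
    high, low = lsn.split("/")
    return (int(high, 16) << 32) + int(low, 16)


def retained_wal_bytes(current_lsn: str, slot_restart_lsn: str) -> int:
    """WAL the primary must keep because a slot has not advanced past restart_lsn."""
    return lsn_to_int(current_lsn) - lsn_to_int(slot_restart_lsn)


def cache_hit_ratio(blks_hit: int, blks_read: int) -> float:
    """Share of block requests served from shared buffers."""
    total = blks_hit + blks_read
    return blks_hit / total if total else 1.0


def autovacuum_trigger(live_rows: int, threshold: int = 50, scale_factor: float = 0.2) -> float:
    """Dead tuples needed before autovacuum vacuums a table (stock defaults shown)."""
    return threshold + scale_factor * live_rows


if __name__ == "__main__":
    print(retained_wal_bytes("2/00000000", "1/80000000") / 2**30, "GiB retained")
    print(f"{cache_hit_ratio(9_500, 500):.0%} cache hit ratio")
    print(autovacuum_trigger(100_000_000), "dead tuples before autovacuum starts")
```

With the default scale factor, a 100-million-row table accumulates roughly 20 million dead tuples before autovacuum starts on it, which is one reason write-heavy tables fall behind.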

Adding read replicas does not solve these problems. Replicas suffer from WAL apply lag of their own and add to the operational surface area. They do not relieve write bottlenecks on the primary, and they do not make analytical queries faster. They only move the contention to a different server.

| Dimension | SaaS CRUD | AI / Real-Time Application |
|---|---|---|
| Data shape | Narrow rows, normalized schema | Wide vectors + telemetry events |
| Ingestion rate | Bursty, hundreds of TPS | Continuous, 10K+ events/sec |
| Query pattern | Index lookups, point reads | Mixed scans + vector distance + aggregations |
| I/O profile | Read-heavy, predictable | Write-heavy with analytical scans |
| Primary bottleneck | Connection count | Disk I/O + WAL throughput |

What is the ETL tax and why is it the wrong response?

The instinctive response to these limits is to move analytical data somewhere else. Split the workload: Postgres handles transactions, a warehouse handles analytics, and a pipeline bridges them. This is the ETL Tax: the total cost of maintaining that bridge, including pipeline engineering hours, warehouse compute, data staleness, schema drift incidents, and egress fees.

Companies like Instacart, GitLab, and Ramp have built in-house Postgres-to-analytics replication pipelines. The typical effort requires a 10-person data engineering team working for six months to design, build, test, and productionize the pipeline at scale. That is roughly 10,000 engineering hours before the first analytical query runs in production. Even with managed ETL tools, projects range from $17,500 at startup scale to over $400,000 for enterprise deployments. Hidden costs including egress, staffing, and downtime routinely exceed license fees by 2 to 4x. Companies still report spending up to $750K over four years and 100 hours per integration.
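The 10,000-hour figure above follows from simple arithmetic. The sketch below assumes roughly 21 working days per month and 8 hours per day. Both are illustrative assumptions, not figures reported by the companies named.

```python
# Back-of-the-envelope check of the DIY pipeline estimate.
# Assumptions (not from the source): 21 working days per month, 8 hours per day.

WORKING_DAYS_PER_MONTH = 21
HOURS_PER_DAY = 8


def engineering_hours(engineers: int, months: int) -> int:
    """Total person-hours for a team of `engineers` working for `months`."""
    return engineers * months * WORKING_DAYS_PER_MONTH * HOURS_PER_DAY


if __name__ == "__main__":
    print(engineering_hours(engineers=10, months=6))
```

Under these assumptions a 10-person team working for six months comes to 10,080 hours, in line with the rounded estimate.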

Beyond cost, there is the freshness penalty. Batch ETL means analytics lag by hours or days. For AI applications making real-time decisions (fraud detection, dynamic pricing, RAG quality monitoring), stale data produces wrong answers. And the problem compounds: every new data source adds a pipeline, every schema change requires pipeline maintenance, and every outage requires a different runbook for a different tool.

The ETL Tax is expensive, and architecturally unnecessary if your database stack is designed with analytics in mind from the start. The right answer is not a better ETL tool. It is eliminating the need for ETL altogether.

| Cost Category | What It Looks Like | Typical Cost |
|---|---|---|
| DIY replication build | 10-person team, 6 months | ~10,000 engineering hours |
| Managed ETL tools | Fivetran, Airbyte, custom connectors | $17,500 to $400,000+/yr (hidden costs 2-4x license) |
| Warehouse compute | Snowflake/Redshift credits for staging and transforms | Variable, scales significantly with usage |
| Data staleness | Hours-to-days-old analytics | Wrong decisions for real-time use cases |
| Vendor sprawl | Separate Postgres + warehouse + CDC tool + monitoring | Compounding operational overhead |

What does a modern managed Postgres with NVMe and native analytics look like?

ClickHouse was built on a set of convictions: open source so you are never locked in, cost-effective so performance is not gated by spend, and enterprise-ready so you do not outgrow it. ClickHouse Managed Postgres applies the same philosophy to OLTP. It is not a fork or a proprietary compatibility layer. It is vanilla Postgres, run the way we believe databases should be run.

That belief is organized around three pillars.

Pillar 1: Performance via local NVMe

Most managed Postgres offerings run on network-attached block storage. AWS RDS uses EBS. GCP Cloud SQL uses Persistent Disk. Every I/O operation crosses the network. Under load, latency jitter compounds, and for AI workloads with heavy write amplification, this becomes the dominant bottleneck.

ClickHouse Managed Postgres collocates compute and storage on local NVMe drives. The result is microsecond-level disk latency versus milliseconds for network-attached storage. NVMe delivers roughly 300K IOPS compared to 16K for EBS gp3. Independent benchmarks from Ubicloud show 4.6x higher TPC-C throughput and 7.7x lower p99 latency when running Postgres on NVMe versus RDS gp3. On mixed workloads typical of high-throughput web applications and AI-driven services, ClickHouse Managed Postgres benchmarks at 2x to 10x faster than the competition.
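To see what the IOPS gap means for Postgres specifically, the sketch below converts the two IOPS figures quoted above into a ceiling on page throughput. It assumes every I/O moves exactly one default-size 8 kB Postgres page, which is a simplification: real devices also have separate bandwidth limits, and WAL writes are not page-sized.

```python
# Upper bound on random page throughput implied by a device's IOPS limit.
# Assumption: each I/O transfers exactly one 8 kB Postgres page (the default block size).

PAGE_BYTES = 8192


def page_throughput_mib_s(iops: int) -> float:
    """MiB/s moved if every I/O operation reads or writes one page."""
    return iops * PAGE_BYTES / 2**20


if __name__ == "__main__":
    for label, iops in [("local NVMe", 300_000), ("EBS gp3", 16_000)]:
        print(f"{label}: {page_throughput_mib_s(iops):,.2f} MiB/s")
```

The absolute numbers matter less than the ratio: under this model the page-level ceiling scales linearly with IOPS, so the quoted figures imply a gap of nearly 19x before latency is even considered.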

What this solves in practice: the disk IOPS bottlenecks that cause ingestion slowdowns, multi-hour VACUUM cycles, tail latency spikes, and WAL throughput limits. These are the exact pain points that surface when Postgres serves AI-driven, high-throughput applications.

Pillar 2: Fully managed operational experience

The service provides up to two synchronous standbys across availability zones with quorum-based streaming replication. A write is acknowledged when at least one standby confirms. Automatic failover happens with minimal disruption, and HA standbys are dedicated to failover readiness rather than exposed for reads, so promotion is always safe.
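The acknowledgement rule can be modeled in a few lines. This is a toy model of quorum commit, not the service's implementation, and the standby names are hypothetical. In stock Postgres the same rule is expressed with the `ANY n (...)` form of the `synchronous_standby_names` setting, which the second helper formats.

```python
# Toy model of quorum-based synchronous commit.
# Standby names are hypothetical; this does not talk to a real cluster.


def is_acknowledged(confirmations: dict[str, bool], quorum: int = 1) -> bool:
    """A write is acknowledged once at least `quorum` standbys have confirmed it."""
    return sum(confirmations.values()) >= quorum


def quorum_setting(standbys: list[str], quorum: int = 1) -> str:
    """Format the value of Postgres's synchronous_standby_names for quorum commit."""
    return f"ANY {quorum} ({', '.join(standbys)})"


if __name__ == "__main__":
    standbys = ["standby_az_b", "standby_az_c"]
    print(quorum_setting(standbys))
    print(is_acknowledged({"standby_az_b": True, "standby_az_c": False}))
    print(is_acknowledged({"standby_az_b": False, "standby_az_c": False}))
```

In this model, with a quorum of one out of two standbys, a slow or failed standby in one availability zone does not hold up commits as long as the other one confirms.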

Built-in backups use WAL-G with point-in-time recovery and a default seven-day retention window. You can fork from any recovery point for testing, debugging, or backfills without touching production. Read replicas scale read traffic independently from the HA standbys. Enterprise security includes encryption at rest and in transit, private networking, and SSO.

A single console manages both Postgres and ClickHouse. One vendor, one contract. No stitching together DMS, Kinesis, S3, and Redshift, and no managing three separate vendor relationships. You manage your schema and queries. The service manages provisioning, patching, failover, backups, and monitoring.

Pillar 3: Open source, no lock-in

This is vanilla Postgres with standard extensions and standard tooling. The integration components, pg_clickhouse and PeerDB (which powers ClickPipes CDC), are also open source. You can self-host the entire stack or use ClickHouse Cloud. The exit door is always open.

| Capability | AWS (RDS/Aurora) | GCP (Cloud SQL/AlloyDB) | Databricks + Neon | Snowflake + Crunchy | ClickHouse Managed Postgres |
|---|---|---|---|---|---|
| NVMe Performance | EBS (network-attached) | Persistent Disk / Colossus | Network-attached | TBD | Local NVMe collocated |
| Real-Time Analytics | Separate (Redshift) | AlloyDB HTAP only | Batch-oriented | Batch-oriented | Sub-second via ClickHouse |
| Native CDC to OLAP | Requires DMS / 3rd party | Requires Dataflow / 3rd party | New / immature | New / immature | ClickPipes (battle-tested) |
| Unified Query Layer | No | No | No | No | pg_clickhouse |
| Open Source Stack | Proprietary layers | AlloyDB Omni (partial) | Neon OSS / Databricks platform is proprietary, built on OSS components | Both proprietary | 100% (Postgres + ClickHouse + PeerDB + pg_clickhouse) |
| Enterprise Security | Yes | Yes | Neon maturing | Yes | Yes (HA, PITR, encryption, private networking) |

Note: AWS and GCP have deep ecosystem breadth. Neon offers fast developer onboarding. Snowflake leads on governance. This table focuses on architectural capabilities for real-time AI workloads.

How does native Postgres-to-ClickHouse integration eliminate the ETL tax?

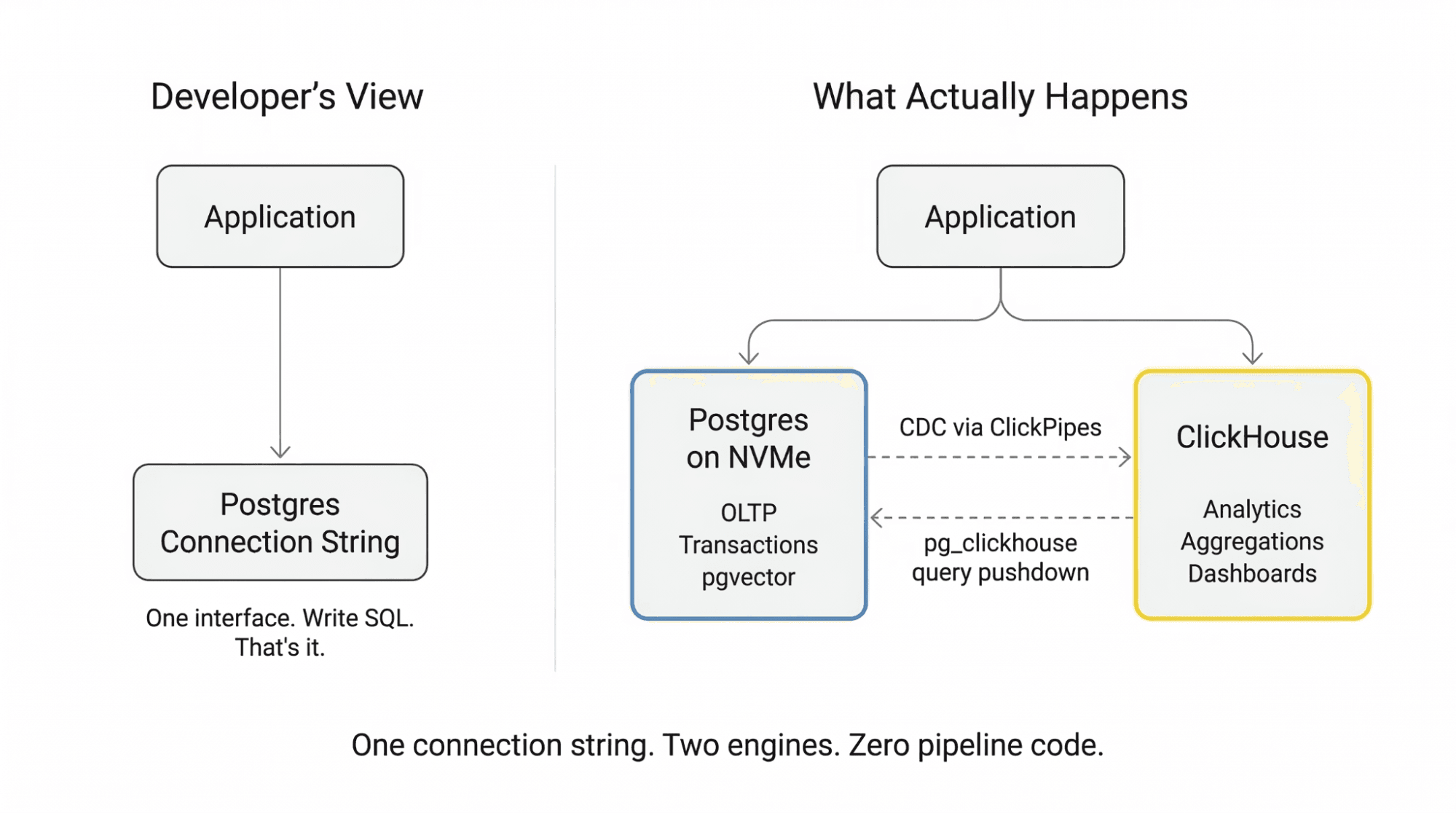

Instead of building ETL pipelines to a separate warehouse, ClickHouse Managed Postgres offers a native path: transactional data flows continuously to ClickHouse via built-in CDC, and you query it transparently from Postgres via the pg_clickhouse extension. One connection string. No pipeline code. No warehouse to manage.

How the data flows

ClickPipes CDC is powered by PeerDB, an open-source change data capture engine acquired by ClickHouse. It uses Postgres logical decoding to stream committed changes directly to ClickHouse. Initial loads use parallel snapshotting, moving 1TB in roughly two hours rather than the days that serial approaches require. Ongoing replication latency runs as low as 10 seconds with continuous slot consumption, avoiding the reconnection cycles that cause WAL bloat on the Postgres side.
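As a rough sense of scale, the initial-load figure can be turned into a sustained transfer rate. The sketch assumes 1 TB means 10^12 bytes and a constant rate. The two-day comparison is a hypothetical stand-in for "days", not a measured number.

```python
# Sustained throughput implied by an initial-load time.
# Assumption: 1 TB means 10**12 bytes and the transfer rate is constant.


def required_mb_per_s(total_bytes: float, hours: float) -> float:
    """Average MB/s needed to move `total_bytes` in `hours`."""
    return total_bytes / (hours * 3600) / 1e6


if __name__ == "__main__":
    print(f"{required_mb_per_s(1e12, 2):.1f} MB/s for 1 TB in 2 hours")
    # Hypothetical serial baseline: the same load spread over two days.
    print(f"{required_mb_per_s(1e12, 48):.1f} MB/s for 1 TB in 2 days")
```

Moving 1 TB in two hours requires sustaining about 139 MB/s end to end, which is why the snapshot is split into parallel streams in the first place.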

Schema evolution is automatic. ADD COLUMN and DROP COLUMN operations propagate without manual intervention. With PG17 and later, failover replication slots ensure that CDC pipelines survive primary failovers without requiring full resyncs. The slot state propagates to the new primary automatically.

Setup is straightforward: open the ClickHouse Cloud console, navigate to Data Sources, select Postgres CDC, provide connection details, and the pipeline is running. One click, not one quarter.

How queries route

The pg_clickhouse extension is an open-source Postgres foreign data wrapper (Apache 2.0 licensed) that creates foreign tables pointing to ClickHouse. Queries against these tables are transparently rewritten with full pushdown: filters, GROUP BY, ORDER BY, HAVING, and SEMI JOINs all execute in ClickHouse's columnar engine, not in Postgres. Traditional FDWs pull millions of rows back into Postgres for processing. pg_clickhouse keeps the heavy computation in ClickHouse and returns only the results.
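The difference between the two strategies is easiest to see by counting the rows that cross the wire. The following is a toy model in plain Python, not pg_clickhouse code: the `orders` data, the `RemoteTable` class, and its method names are all hypothetical.

```python
# Toy comparison of "fetch rows, aggregate locally" vs. "push the aggregate down".
# RemoteTable and its methods are hypothetical stand-ins, not a real client API.
from collections import defaultdict


class RemoteTable:
    """Pretends to be a remote table and counts the rows it sends back."""

    def __init__(self, rows):
        self.rows = rows
        self.rows_sent = 0

    def fetch_all(self):
        # No pushdown: every row travels to the caller.
        self.rows_sent += len(self.rows)
        return list(self.rows)

    def sum_by(self, key, value):
        # Pushdown: the remote side groups and sums, returning one row per group.
        totals = defaultdict(int)
        for row in self.rows:
            totals[row[key]] += row[value]
        self.rows_sent += len(totals)
        return dict(totals)


def local_sum_by(rows, key, value):
    """Aggregate on the caller's side after pulling every row."""
    totals = defaultdict(int)
    for row in rows:
        totals[row[key]] += row[value]
    return dict(totals)


if __name__ == "__main__":
    orders = [{"region": f"r{i % 4}", "amount": i} for i in range(100_000)]

    pulled = RemoteTable(orders)
    without_pushdown = local_sum_by(pulled.fetch_all(), "region", "amount")

    pushed = RemoteTable(orders)
    with_pushdown = pushed.sum_by("region", "amount")

    assert without_pushdown == with_pushdown
    print("rows sent without pushdown:", pulled.rows_sent)
    print("rows sent with pushdown:", pushed.rows_sent)
```

Both paths return the same totals, but the pushed-down version transfers one row per group instead of one row per order. The gap widens as the table grows while the number of groups stays small.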

The performance difference is substantial. On TPC-H benchmarks, 14 of 22 queries are fully pushed down, delivering 60x or greater improvements. On representative queries: Q1 aggregations drop from 4,693ms to 268ms (17.5x faster), Q3 joins from 742ms to 111ms (6.7x), and Q6 filter-plus-aggregation queries from 764ms to 53ms (14.4x). pg_clickhouse is the fastest Postgres extension on ClickBench.
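The per-query speedups are simply the ratio of the two timings. The short sketch below recomputes them from the millisecond figures quoted above, with no other inputs.

```python
# Recompute the speedups from the quoted TPC-H timings (milliseconds).

TIMINGS_MS = {
    "Q1": (4693, 268),
    "Q3": (742, 111),
    "Q6": (764, 53),
}


def speedup(before_ms: float, after_ms: float) -> float:
    """How many times faster the second timing is than the first."""
    return before_ms / after_ms


if __name__ == "__main__":
    for query, (postgres_ms, pushed_ms) in TIMINGS_MS.items():
        print(f"{query}: {speedup(postgres_ms, pushed_ms):.1f}x")
```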

For the developer, nothing changes at the application layer. No new connection strings. No SQL rewrites. Existing ORMs, dashboards, and cron jobs continue to work. They simply run orders of magnitude faster on analytical queries.

The observability bonus

For teams already using ClickHouse for analytics or observability, this integration opens a powerful expansion path. Instrument your application with OpenTelemetry SDKs, collect observability signals into ClickHouse, and correlate them directly with your application data from Postgres. You can see not just that a query is slow, but which users it affects and what they were doing, because operational and observability data live in the same analytical engine. ClickHouse Cloud's compute separation lets you isolate observability workloads into a child service without impacting your production analytics.

| Query | Postgres (ms) | Via pg_clickhouse (ms) | Speedup |
|---|---|---|---|
| Q1 (aggregation) | 4,693 | 268 | 17.5x |
| Q3 (joins) | 742 | 111 | 6.7x |
| Q6 (filters + agg) | 764 | 53 | 14.4x |

How does the stack grow with your application?

Most database migrations are traumatic because they are re-platforming events. The architecture described here avoids that entirely. You are not migrating from Postgres. You are activating capabilities within the same stack.

Stage 1: Just Postgres

You start with ClickHouse Managed Postgres. It is vanilla Postgres on NVMe, fast, managed, and open source. Your application does CRUD, vector search with pgvector, maybe some light reporting queries. Everything runs in Postgres. This is fine, and you should stay here as long as it works. Out of the box, you get microsecond disk latency, automatic HA across availability zones, backups with point-in-time recovery, and the ability to fork for testing without touching production.

Stage 2: Analytics are slowing me down

You notice that dashboard queries take seconds. Autovacuum competes with production writes. Your data team wants to aggregate months of event data. The p99 on your OLTP queries is creeping upward.

Enable CDC from the ClickHouse Cloud console and your data starts flowing to ClickHouse, compressed, columnar, and ready for analytical queries. Use pg_clickhouse to route slow queries through foreign tables. Analytical queries run 10x to 60x faster. Postgres stops competing with itself. You did not migrate, re-platform, or hire a data engineer to build pipelines.

Stage 3: Full unified stack

Your AI application generates hundreds of millions of events. You need sub-100ms dashboards, real-time user analytics, and time-series rollups. ClickHouse handles the analytical load. Postgres handles transactions. CDC keeps them synchronized with replication lag as low as 10 seconds. pg_clickhouse gives your application a single query interface.

You add OpenTelemetry instrumentation. Now your observability signals flow into ClickHouse alongside your application data. You can correlate which API endpoints are slow with which customers are affected and what they were doing, all in one query engine. You right-size Postgres (fewer vCPUs, fewer replicas) because ClickHouse handles the analytical load. Single console, single vendor. Total stack cost is lower than a single oversized Postgres plus a warehouse plus ETL would have been.

When is ClickHouse Managed Postgres the right choice?

| Your Situation | Recommendation | Why |
|---|---|---|
| Standard CRUD app, under 100 GB, light analytics | ClickHouse Managed Postgres (Postgres only) | NVMe performance, managed HA, and the option to activate analytics later. No need to over-engineer. |
| AI/real-time app, 100 GB to tens of TB, dashboards + OLTP | Full unified stack (Postgres + ClickHouse) | Activate CDC and pg_clickhouse. Offload analytics. Keep Postgres focused on transactions. |
| Extreme-scale vector-only workload, billions of embeddings | Specialized vector DB alongside Postgres | pgvector handles most production RAG workloads. At extreme scale with single-digit millisecond requirements over billions of vectors, a purpose-built vector engine may be warranted. |

A few common counter-arguments deserve honest treatment.

What if we already run everything on AWS?

If you are not disk-bound and do not need real-time analytics, RDS or Aurora may be fine. But if IOPS ceilings are causing multi-hour VACUUM cycles, p99 latency is spiking under load, or your analytics require stitching together DMS, Kinesis, S3, and Redshift, those are problems that NVMe storage and native CDC solve directly. ClickHouse Cloud runs on AWS (with BYOC available), so this is not a cloud-migration conversation.

What about Databricks and Neon for serverless Postgres?

Neon solves fast provisioning and branching well. But production AI agents need fast, scalable, reliable OLTP under sustained load, not just fast forking. On PostgresBench, ClickHouse Managed Postgres delivers 2x to 3.4x higher TPS than Neon across tested configurations, with up to 4x lower P99 latency at scale. Combined with native CDC via ClickPipes and the pg_clickhouse unified query layer, this creates a tightly integrated OLTP-to-analytics stack that the Databricks and Neon pairing lacks.

Can HTAP databases handle both OLTP and OLAP?

Purpose-built engines outperform general HTAP solutions on complex aggregations by orders of magnitude. ClickHouse executes analytics up to 100x faster than Postgres. The unified stack gives you best-of-breed for both workloads rather than a compromise on each.

Why not just use Postgres for everything?

This works well until analytics compete with transactions for the same I/O budget. The value of this architecture is that you do not have to decide in advance. Start with Postgres. Activate analytics when you hit the wall, without re-platforming.

Why the unified Postgres and ClickHouse stack matters

The database market is moving from rigid OLTP-versus-OLAP silos toward unified stacks that prioritize immediacy, performance, and simplicity. The question is no longer "which database for which job." It is "which stack lets me start fast and scale without re-platforming."

ClickHouse Managed Postgres is built on the same convictions as ClickHouse itself: open source so you own your data, cost-effective so performance is not gated by spend, enterprise-ready so you do not outgrow it. That philosophy now extends from analytics to the transactional layer.

Start with Managed Postgres. Activate analytics when you need them. Explore pg_clickhouse on GitHub and the ClickPipes Postgres CDC docs.