For years, we have focused on building fast systems. ClickHouse is an example of that focus. Performance is not a feature we add later. It is a core design goal from the start.

We applied a similar approach when building our managed Postgres service. The result is offering one of the fastest managed Postgres services to our customers. Postgres handles transactional workloads, while ClickHouse handles analytical workloads. Together they form a unified data stack enabling a "best-of-breed" foundation SaaS and AI applications.

With that in mind, it felt natural to evaluate it the same way we evaluate ClickHouse: with a public, reproducible benchmark.

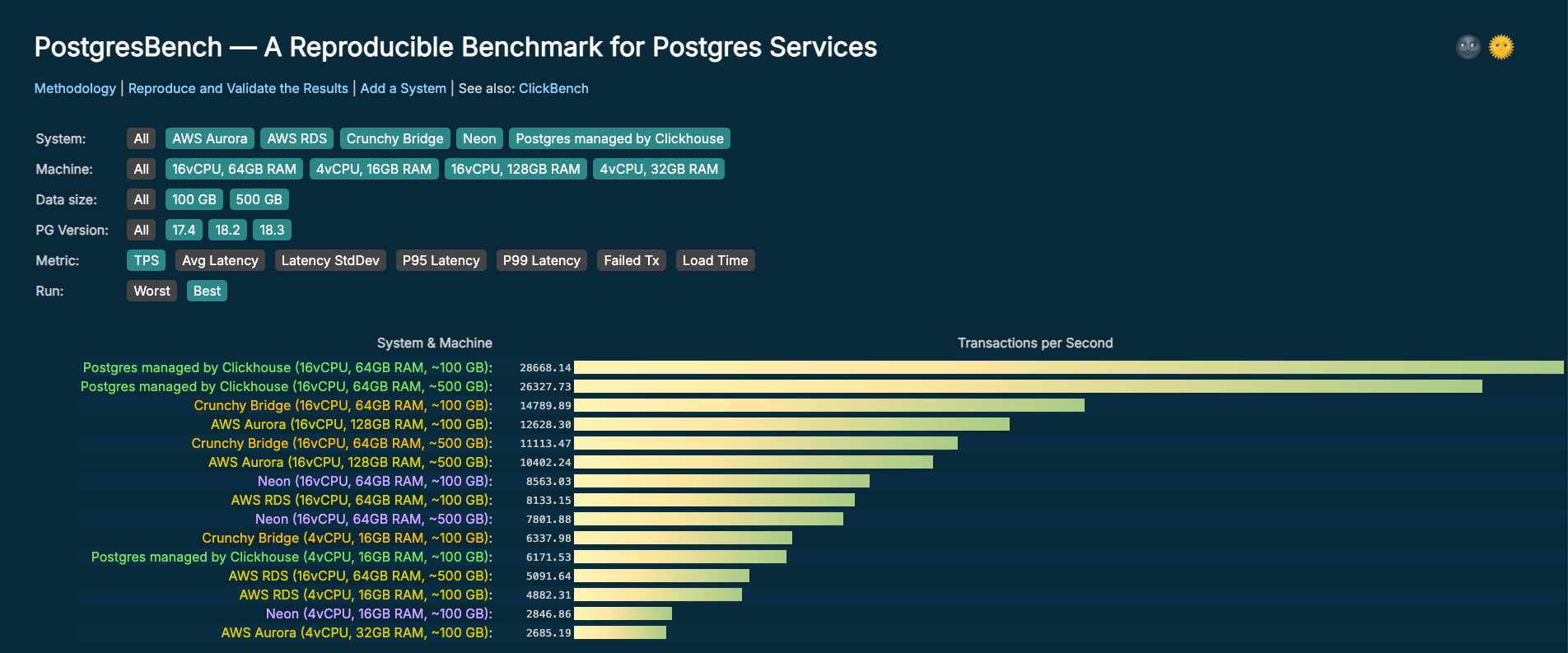

That is why we built PostgresBench, a benchmark to compare managed Postgres services.

From ClickBench to PostgresBench #

ClickBench is a widely referenced OLAP benchmark. It benchmarks more than 40 databases using a transparent and reproducible methodology. All queries, datasets, and results are public and anyone can validate the numbers or submit improvements.

PostgresBench applies the same methodology to transactional Postgres workloads. The rules are straightforward:

- Use a well-understood, standard workload

- Keep infrastructure consistent across all services tested

- Publish all configuration so results can be reproduced

- Allow anyone to submit results or flag issues

If a number looks wrong, it can be checked. If a configuration is unfair, it can be fixed. That is the point.

Benchmark design #

Workload #

PostgresBench is built on pgbench, the standard Postgres benchmarking tool. We use the TPC-B-like workload it includes out of the box, which simulates short concurrent transactions with frequent writes and updates. It is a reasonable proxy for common transactional patterns: payments, order processing, inventory updates, and similar workloads that hit the database hard with small, frequent writes.

We chose pgbench deliberately. Tools like sysbench and Percona TPCC are designed originally for MySQL workloads. For a Postgres benchmark, pgbench feels more natural, and it ships with Postgres, which makes it easy for anyone to reproduce results without additional tooling.

Running parameters #

Each benchmark run uses the following parameters:

pgbench -c 256 -j 16 -T 600 -M prepared -P 30 \

-s $SCALE_FACTOR \

-h $PGHOST -p $PGPORT -U $PGUSER -d $PGDATABASE

We ran each benchmark with 256 clients and 16 threads, which reflects realistic concurrency for a production transactional workload. Each run lasts 10 minutes, long enough to move past warmup and capture stable throughput.

We tested two scale factors: 6849 (~100 GB) and 34247 (~500 GB). These correspond to dataset sizes typical of real Postgres deployments: one where the app is getting started, growing quickly and working set reasonably fits in cache and the other that has achieved reasonable scale, is growing and working set starts spilling to disk The gap between results at these two sizes tells you something useful about how a service handles storage pressure as data grows.

Metrics captured #

We report average TPS, average latency, P95 latency, and P99 latency across all three runs per configuration. We publish the ranking for the best and worst run, and the details of each individual run are available in the repository.

Fairness #

No benchmark is perfectly neutral. Every choice, from instance type to Postgres configuration, can favor one system over another. We explain our thinking behind each decision below, and document the exact settings used for each system in the benchmark repository alongside the results.

Client machine setup #

We provisioned a 16 vCPU, 64 GB instance in the us-east-2 region to run the benchmark client, sized to ensure the client is never the bottleneck. All services were tested in the same region, so results reflect only database performance, not cross-region network latency. We also do not colocate client and database by availability zone, since not all services offer this capability. However, to ensure fairness for those that do, this is something we may consider adding in the future. Contributions are welcome.

Instance selection #

For most services, we targeted a 1:4 CPU-to-RAM ratio and tested two sizes: 4 vCPUs/16GB RAM and 16 vCPUS/64GB RAM. Aurora does not offer an instance class that provides this ratio so we used a 1:8 ratio at two sizes as well: 4 vCPUs/32 GB RAM and 16 vCPUS/128 GB RAM.

We used Graviton instances with NVMe caching for all services that support them, including AWS RDS and Aurora. This gives competitors the same hardware advantage, even though NVMe in those cases is used for caching rather than primary storage.

Single-node #

While all services offer high availability, the underlying architectures differ. Some use standby replication, others use shared or distributed storage layers. Since we are focused on single-node compute and storage performance, we tested without HA enabled to isolate that. We may add HA configurations as a separate dimension in the future.

Default Postgres configuration #

We do not modify default PostgreSQL configurations across services; each system is tested using its out-of-the-box settings. This reflects typical user behavior, where most expect performance without tuning. We may incorporate Postgres configs as an additional dimension in the future.

A note on pricing #

We did not compare pricing. Postgres managed by ClickHouse had not yet been released at the time of testing. We expect pricing to be competitive for similar hardware profiles and you'll be able to extrapolate price-performance from the results here once it is available.

The first cohort #

Systems included #

The first release of PostgresBench covers five services, each tested in two instance configurations: a smaller 4 vCPU / 16 GB setup and a larger 16 vCPU / 64 GB setup, or equivalent. This lets us observe how each service scales with more resources, not just how it performs at a single point.

All services were tested in us-east-2 with HA disabled, using Postgres 17 or 18 depending on what each provider supports. Aurora is the only service in this cohort still on Postgres 17 at time of testing.

| System | T-shirt size | Instance | vCPUs | RAM | Instance storage | Primary storage |

|---|---|---|---|---|---|---|

| Postgres managed by ClickHouse | Small | m8gd.xlarge | 4 | 16 GB | 237 GB - NVMe | NVMe |

| Postgres managed by ClickHouse | Large | m8gd.4xlarge | 16 | 64 GB | 950 GB - NVMe | NVMe |

| AWS Aurora PostgreSQL | Small | db.r6gd.xlarge | 4 | 32 GB | 237 GB - NVMe | Aurora storage |

| AWS Aurora PostgreSQL | Large | db.r6g.4xlarge | 16 | 128 GB | 950 GB - NVMe | Aurora storage |

| AWS RDS for PostgreSQL | Small | db.m8gd.xlarge | 4 | 16 GB | 237 GB - NVMe | 1000 GB - GP3 (16K IOPS) |

| AWS RDS for PostgreSQL | Large | db.m8gd.4xlarge | 16 | 64 GB | 950 GB - NVMe | 1000 GB - GP3 (16K IOPS) |

| Neon | Small | Serverless | 4 | 16 GB | N/A | N/A |

| Neon | Large | Serverless | 16 | 64 GB | N/A | N/A |

| Crunchy Bridge | Small | Standard-16 | 4 | 16 GB | N/A | 6,000 Baseline IOPS / 40,000 Maximum IOPS |

| Crunchy Bridge | Large | Standard-64 | 16 | 64 GB | N/A | 20,000 Baseline IOPS/ 40,000 Maximum IOPS |

Results #

The same script runs the same benchmark on all systems. Each run is done in isolation, no other concurrent processes are running on the machine or the database. Below is a summary table of the results. All results are available on PostgresBench.

Scale factor 6849 (~100 GB)

| Service | T-shirt size | TPS | Avg Latency (ms) | P95 (ms) | P99 (ms) |

|---|---|---|---|---|---|

| Postgres managed by Clickhouse | Small | 6172 | 41.466 | 64.027 | 80.89 |

| Postgres managed by Clickhouse | Large | 28668 | 8.908 | 10.231 | 11.683 |

| AWS Aurora | Small | 2685 | 95.297 | 165.546 | 298.391 |

| AWS Aurora | Large | 12628 | 20.242 | 30.972 | 39.044 |

| AWS RDS | Small | 4882 | 52.419 | 98.005 | 124.198 |

| AWS RDS | Large | 8133 | 31.435 | 72.509 | 97.688 |

| Neon | Small | 2847 | 89.907 | 106.145 | 116.473 |

| Neon | Large | 8563 | 29.832 | 41.423 | 49.213 |

| Crunchy Bridge | Small | 6338 | 40.376 | 66.109 | 85.837 |

| Crunchy Bridge | Large | 14790 | 17.269 | 28.322 | 34.61 |

Scale factor 34247 (~500 GB)

| Service | T-shirt size | TPS | Avg Latency (ms) | P95 (ms) | P99 (ms) |

|---|---|---|---|---|---|

| Postgres managedby Clickhouse | Large | 26328 | 9.703 | 11.402 | 13.197 |

| AWS Aurora | Large | 10402 | 24.581 | 36.178 | 46.493 |

| AWS RDS | Large | 5092 | 50.239 | 88.656 | 117.905 |

| Neon | Large | 7802 | 32.804 | 47.539 | 56.302 |

| Crunchy Bridge | Large | 11113 | 22.996 | 36.402 | 41.683 |