- Adopting AI means not only re-thinking user experiences, but also getting your data strategy right. If you do not, your AI initiatives will fail.

- AI workloads demand high-concurrency, real-time query processing, and full-fidelity data at scale. Legacy batch-oriented architectures weren't built for it.

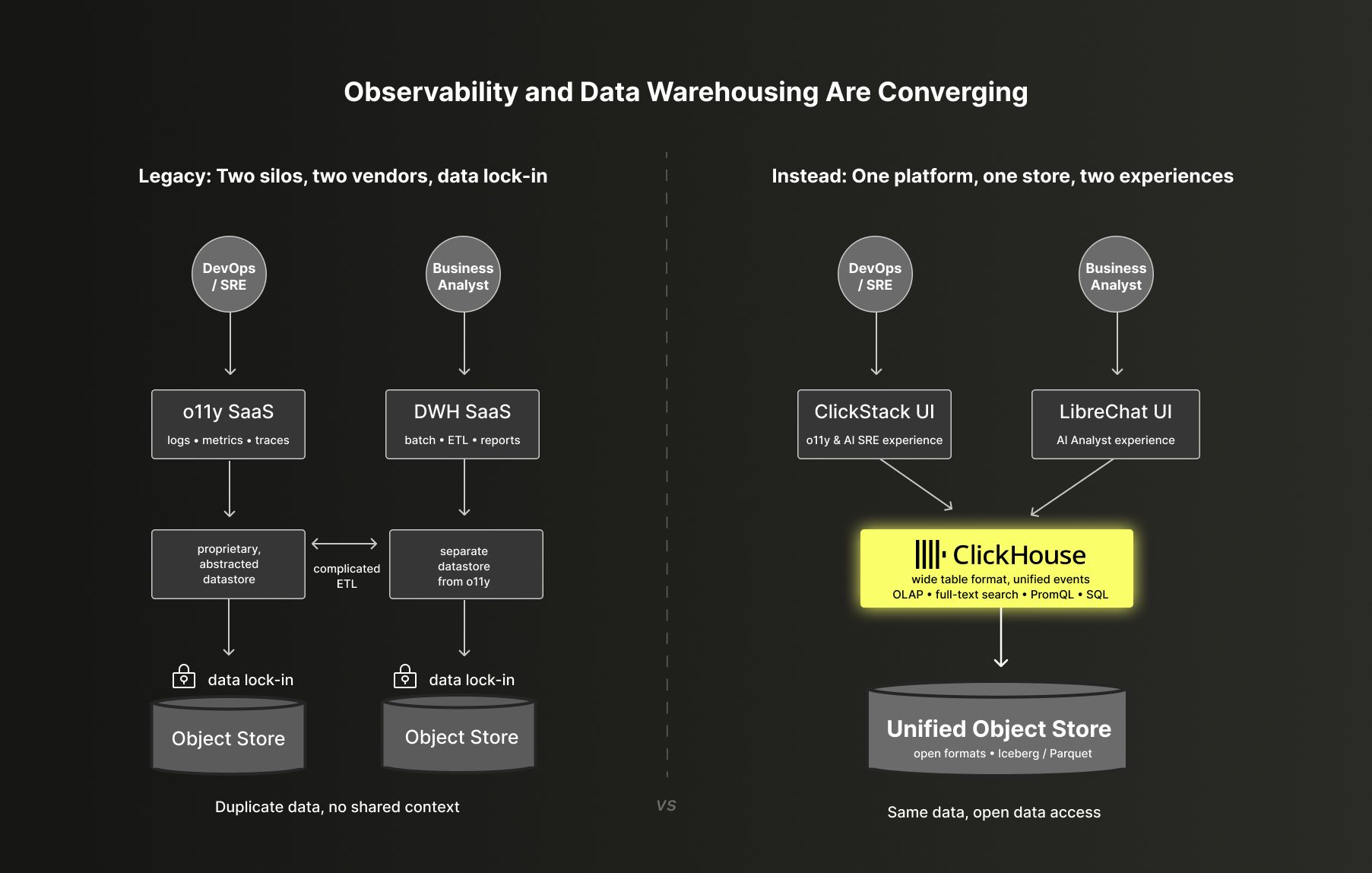

- Previously siloed use cases, such as data warehousing and observability, are converging at the data layer.

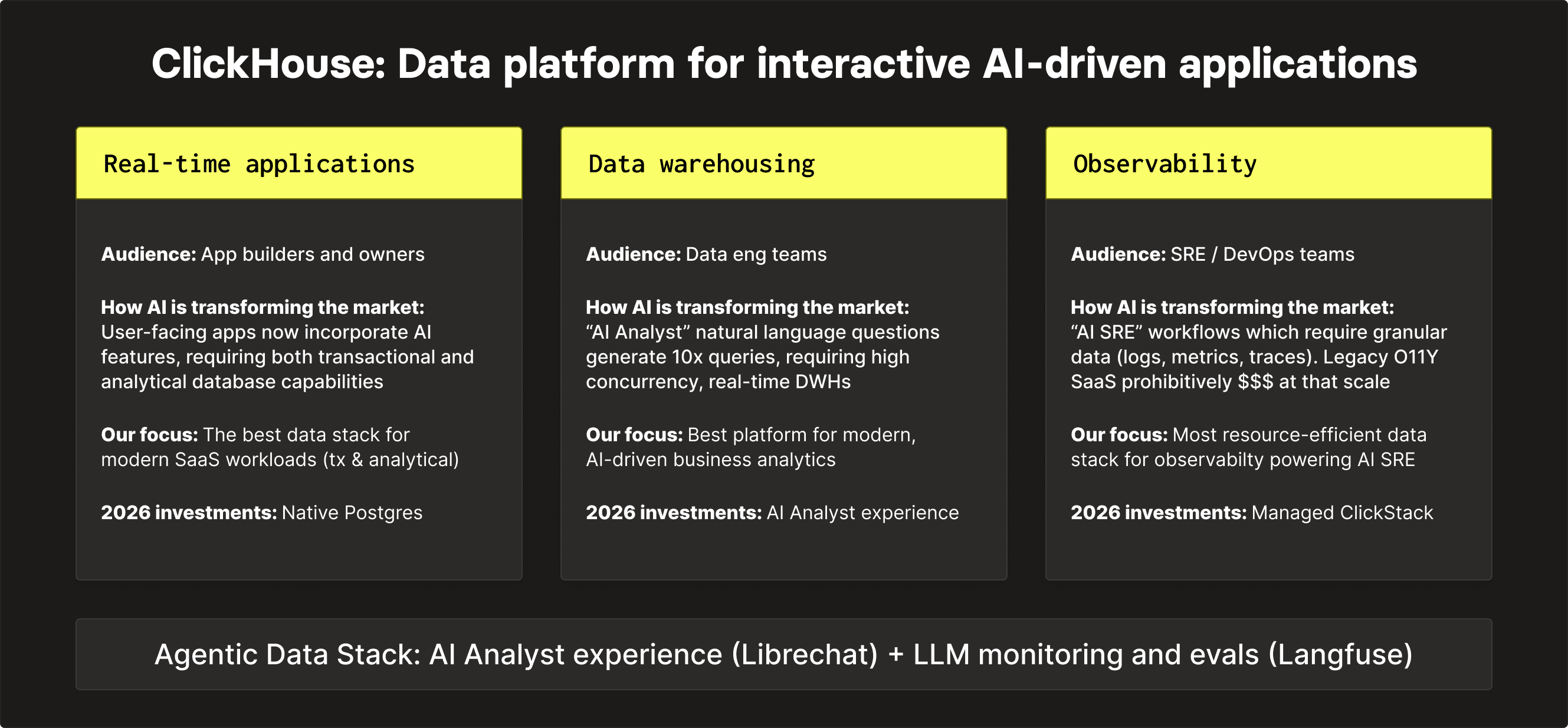

- ClickHouse is evolving into a unified platform for powering AI workloads across AI Apps, AI Analyst, and AI SRE experiences… read on for more.

Summary

AI isn't just another workload on top of your data platform. It fundamentally changes workload expectations across every existing use case.

The three big shifts happening right now are: 1) Applications are becoming agentic, 2) Analytics interfaces are becoming conversational, 3) Observability is shifting from static dashboards to AI-driven investigations. In each case, the underlying data requirements converge around high concurrency, real-time query performance, and full-fidelity data at scale.

These are not requirements that incumbent data platforms were designed for. The data platform choices made over the next few years will shape what's possible: how fast teams can move, what products can be built, what insights are accessible to your business. The question worth asking now is not just whether your current platform handles today's workloads, but whether it's the right foundation for what AI-driven applications actually demand.

In a previous post, I wrote about the unbundling of the cloud data warehouse — how the shift toward interactive, customer-facing applications exposed the architectural mismatch between batch-oriented warehouses and real-time workloads. What I want to describe here is the next wave of that disruption, across three markets: real-time analytics, data warehousing, and observability.

Real-time analytics: The dawn of the "best of breed" database #

Stakeholders: Developers building next-generation user-facing and AI-powered applications

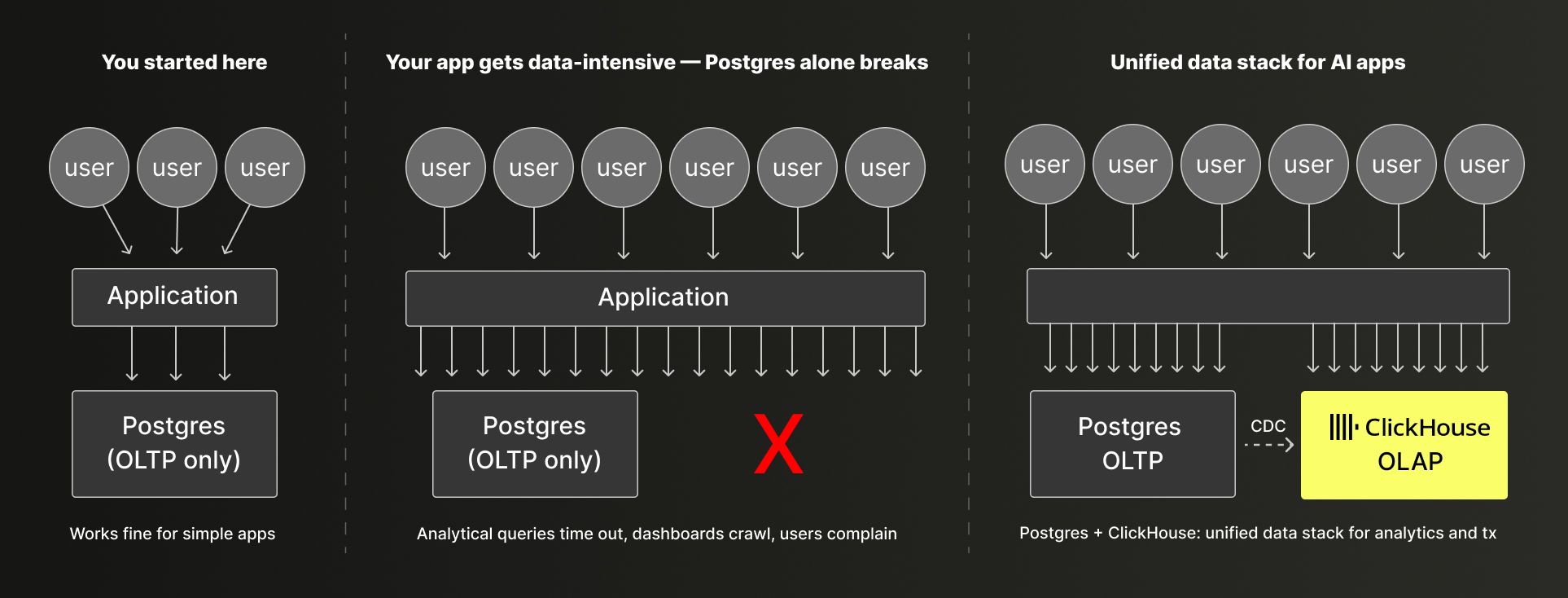

Postgres has become the default database for building modern user-facing applications, because of its superior ability to handle row-oriented transactional data. This worked fine until applications became genuinely data-intensive, driven by real-time dashboards, usage analytics, customer-facing metrics, event streams with millions of rows per second. For these increasingly analytical workloads, Postgres alone stopped scaling. The queries were too slow, the indexes too expensive, the concurrency too low.

The solution the industry landed on was Postgres + ClickHouse: Postgres for transactions and application state, ClickHouse for analytics. This pairing became the de facto modern data stack for any customer-facing application with serious analytical requirements. ClickHouse evolved to be the obvious choice for analytical workloads: fast ingestion, sub-second queries on billions of rows, efficient at the concurrency levels customer-facing applications demand. The data moved from Postgres to ClickHouse via CDC pipelines, and ClickHouse powered everything from embedded product analytics to customer-facing dashboards.

Now AI is accelerating the need for a best-in-class transactional and analytical base for building modern AI applications and agents that power them. LLM-based features including AI-generated insights, anomaly detection, recommendations, and natural language interfaces to product data, require a tighter feedback loop between transactional writes and analytical reads. This is why we are doubling down and building a native Postgres + ClickHouse data stack: a single unified experience where Postgres handles transactional workloads and ClickHouse handles analytics, tightly integrated at the engine level via a native extension – for automatic data replication and management and a unified developer experience.

For platform decision-makers building customer-facing experiences, the trajectory is clear – transactional and analytical capabilities at the datastore layer are required. And tight integration between transactional and analytical capabilities, without losing "best of breed" benefits, is an additional advantage speeding up developer workflow and enabling you to ship new AI-powered capabilities faster.

Data warehousing: AI Analyst workloads break batch-oriented DWH architectures #

Stakeholders: Data engineering teams modernizing data warehousing and business analytics

In the unbundling post, I described how cloud data warehouses like Snowflake were architected for batch ingestion, heavy ETL, and periodic reporting, and how that made them a poor fit for interactive, customer-facing applications.

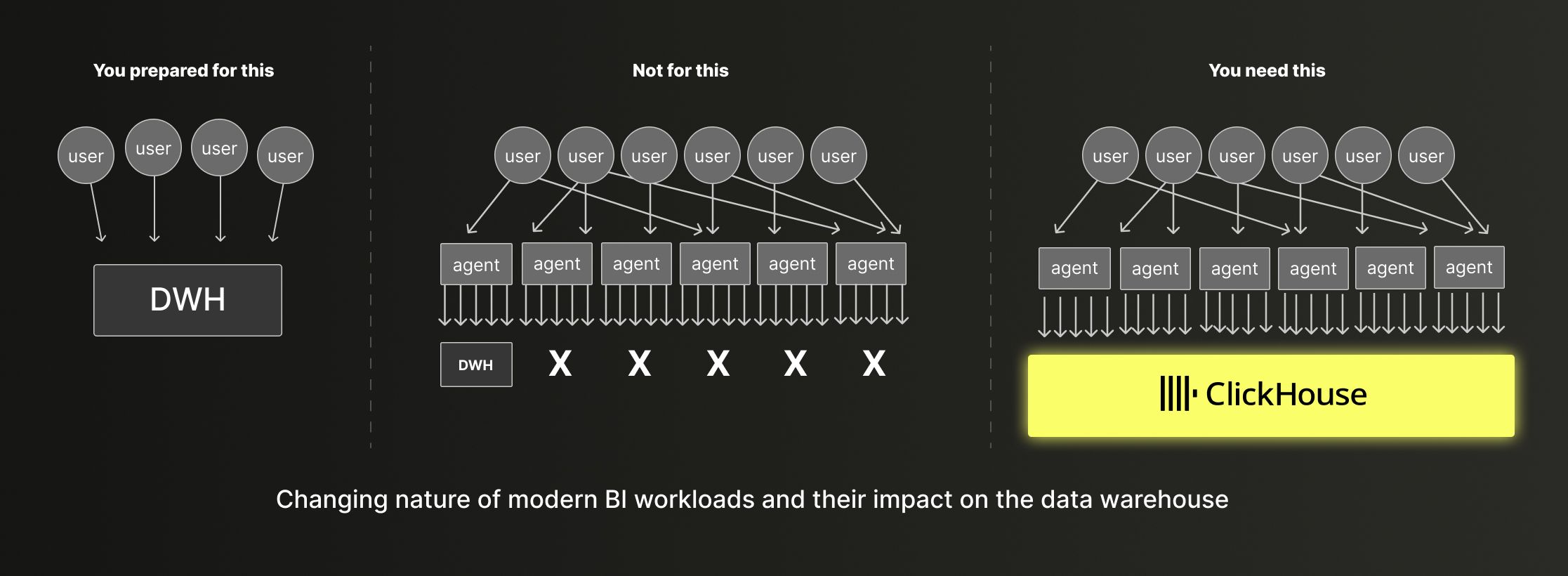

Now the role of traditional data warehouses is being questioned in their "bread-and-butter" use case with the advent of the "AI Analyst" - business analytics starting with natural language prompts deriving downstream assets, including ad-hoc reports and dashboards.

Agent-facing analytics, powered by text-to-SQL tools and natural language analytics interfaces are moving from experimentation to production. The UX implication is obvious: users ask questions in plain English and expect answers in seconds. The infrastructure implication is less obvious but more consequential: each natural language query doesn't just generate one SQL query — it can trigger dozens in rapid succession, as it explores available datasets and reasons through many parallel possibilities. What looks like a single user question becomes a burst of concurrent database queries. As a result, internal analyst-generated workloads start to resemble external customer-facing workloads – high-concurrency, low-latency, interactive responses. The same pattern extends to agents autonomously querying data platforms to find the right data points to base a decision on while solving a problem.

This inverts the assumptions that legacy data warehouse architectures are built around. Platforms like Snowflake and Databricks were designed for ad-hoc, batch-oriented analytics. Their compute models assume queries are infrequent and non-interactive in nature. They optimize for high overall throughput across many queries, not high concurrency and low latency for each query. AI analyst experiences make queries fast and very frequent, and running that workload on a legacy DWH architecture means either unacceptable latency, making the AI assistant feel slow, or costs that scale faster than the value delivered.

ClickHouse was built from the ground up to excel at these requirements: petabyte-scale data, high query concurrency, sub-second response times. It was designed to serve thousands of concurrent users running interactive queries against billions of rows, not occasional analysts running batch reports. It turns out these are exactly the properties the agentic era demands.

For teams making long-term platform bets, the calculus is straightforward: AI-powered business analytics is not a future possibility, it is already here. The platforms that were right for the previous era of batch reporting do not meet its technical requirements. The switching costs of migrating off legacy data warehousing systems are real, but finite. The cost of spending the next five years on the wrong platform, paying a concurrency tax while competitors run interactive AI analytics, is larger.

Observability: AI SRE demands granular data at scale #

Stakeholders: Platform and infrastructure teams owning observability strategy

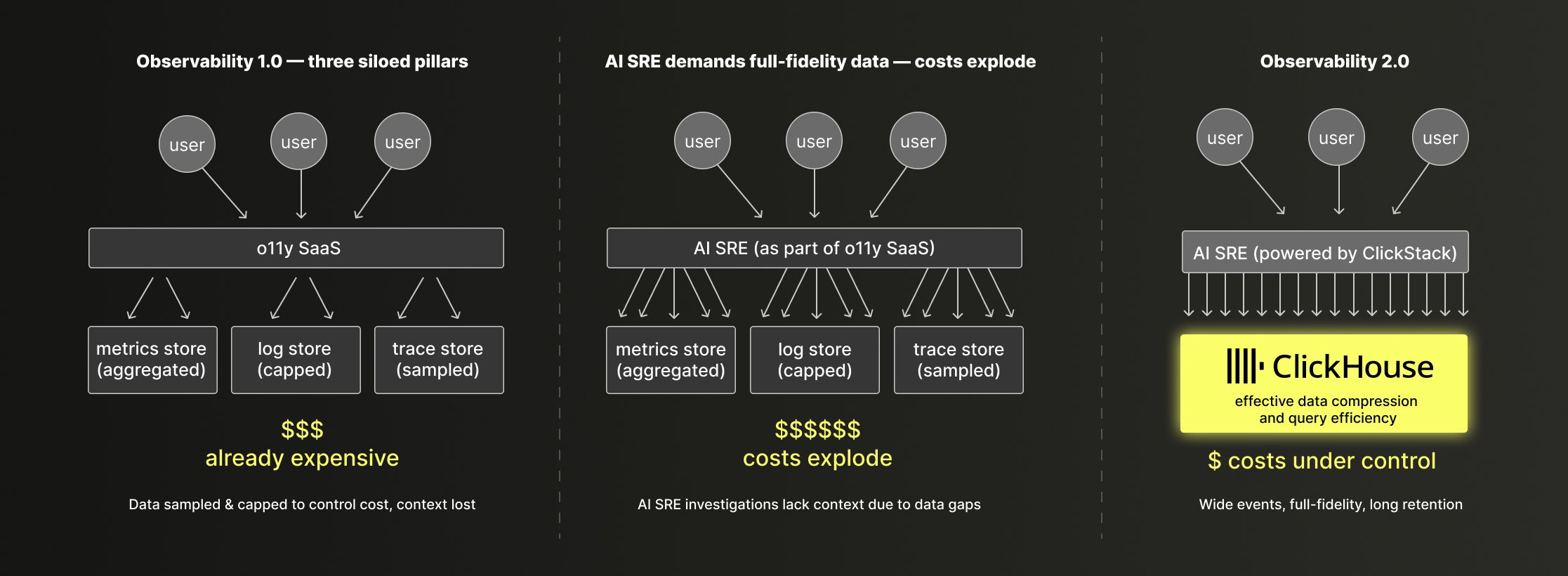

The traditional observability stack is built around three separate pillars (metrics, logs, and traces) each stored in its own specialized system. That architecture made sense when storage and compute were expensive: you pre-aggregated metrics, sampled logs, and kept trace retention short to control costs. The tradeoff was manageable.

AI SRE workflows disrupt this model by introducing two new pressures: a high volume of concurrent natural language queries and a constant need for granular, high-cardinality, long-retention data to drive automated incident triage, root cause analysis and anomaly correlation. Sampled logs and downsampled metrics are not useful for an AI agent trying to correlate an error pattern with a deployment event from three days ago. The more capable you want your AI tooling to be, the more data you need to keep, and the more the cost structure of incumbent platforms works against you.

This is the core shift that Charity Majors has been describing as Observability 2.0: replacing the three-pillar model with a single source of truth based on wide structured events stored in a columnar storage engine. Rather than pre-deciding what questions you'll ask and pre-aggregating for them, you store full-fidelity events and derive metrics, traces, and SLOs from them at query time. Every modern observability company is now built on this model, and many use ClickHouse as the main storage engine.

Traditional observability players like Datadog are facing a real "innovator's dilemma" here. As they are priced on data volumes and significantly rate-limit their platforms, their customers have been trained to ingest less data — sampling logs, downsampling metrics, limiting trace retention — to control costs. Reducing per-GB pricing to enable AI SRE workflows means cannibalizing the revenue model the business is built on. Rebuilding around wide events and columnar storage means abandoning the specialized data structures and pricing mechanics they've scaled for decades.

ClickHouse, on the other hand, is uniquely well-suited here for straightforward reasons: high compression on log and event data, sub-second queries on high-cardinality wide events, efficient at the ingestion volumes that production infrastructure generates, and a cost model based on compute and storage rather than per-GB data ingestion fees.

This is also why we are investing in a turnkey observability stack, ClickStack, observability based on OpenTelemetry and ClickHouse, with an opinionated UI and AI SRE capabilities, available both in open source and as a managed offering.

Observability and DWH are converging: Two markets, one architecture #

Data warehousing and observability have been treated as separate domains for the last decade: separate buyers, separate vendors, separate stacks. And historically, that separation made some technical sense: storage backends, user interface, and consumption patterns. Even the datasets were different, because at first few businesses were fueled by their online presence.

Today, this separation is an outdated convention rather than a technical necessity. On the storage side, virtually all modern data platforms, whether business analytics or observability, now write to object stores. And the compute engines require interactive, low latency queries at high concurrency, as well as support for AI Analyst or AI SRE capabilities.

Finally, in the past, most teams treated observability data as purely operational. But in reality today, API calls are purchases and errors are failed transactions. Instead of looking at the same source of truth, the same events are often being stored twice, on two platforms, by two teams, with a fragile synchronization layer in between.

Teams that reframe all of it as business data, stored once in open formats and queryable by both AI Analyst and AI SRE tooling, eliminate that duplication and unlock context that neither silo had alone.

The platform layer: Agentic analytics and LLM observability #

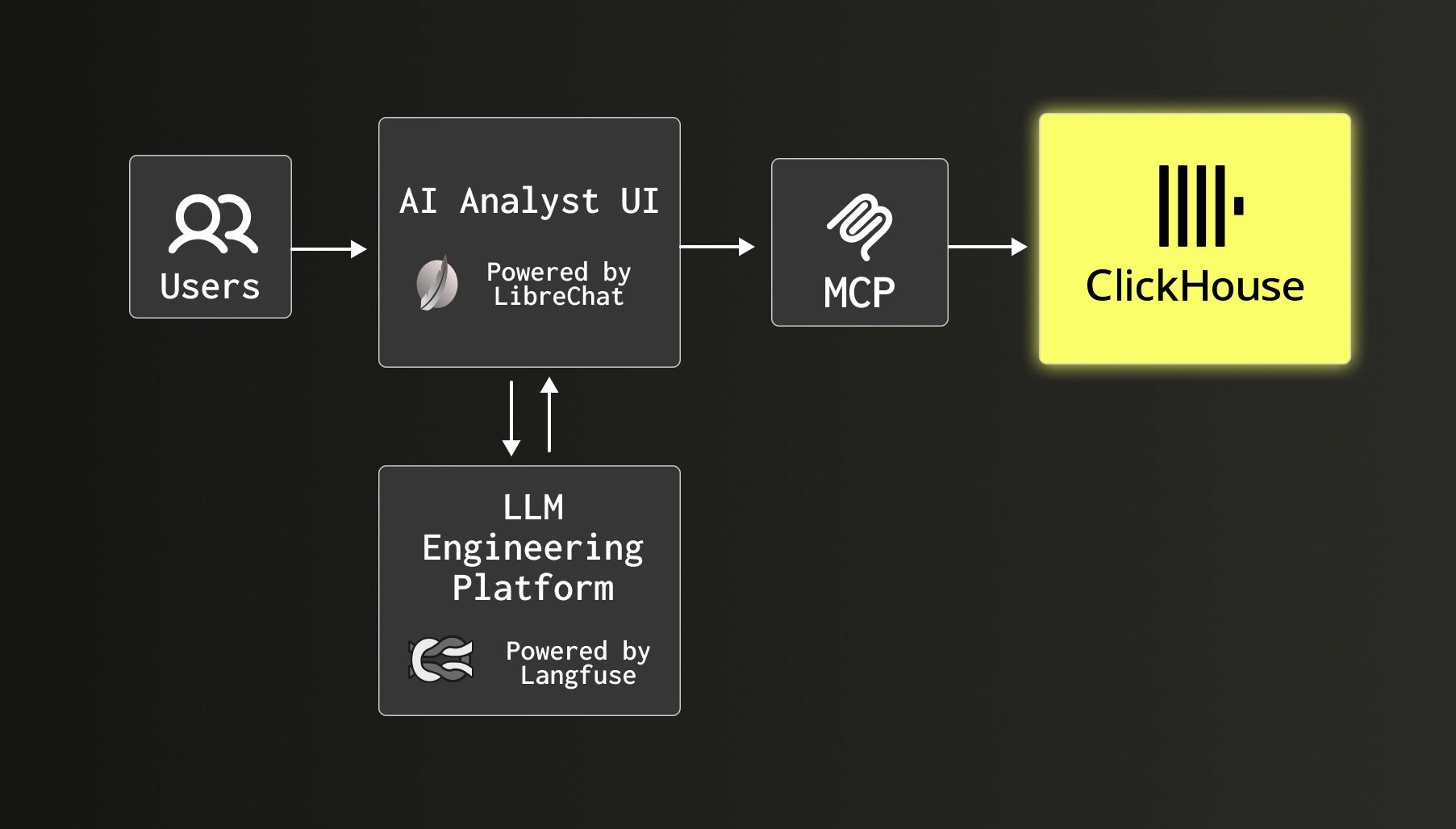

A complete data platform in 2026 is more than just a database. It is a database plus the tools that make it accessible to AI agents and the instrumentation to understand how those agents behave.

Two things are happening simultaneously. First, AI agents are becoming the primary interface to data. Users increasingly don't write SQL, but instead ask questions in natural language, and agents decompose those questions into queries, execute them, and synthesize results. This means a data platform needs to expose its capabilities to agents natively: ready-made UIs, MCP-compatible APIs, agent frameworks that can reach into your data without bespoke integration work for every use case. This is why we acquired LibreChat, the leading open-source AI chat platform, and made it a core component of what we call the Agentic Data Stack. LibreChat combined with ClickHouse gives teams a turnkey way to deploy analytics agents over their data, without building the agent layer from scratch.

Second, as AI agents proliferate, understanding and governing how they behave becomes a first-order engineering problem. LLM observability (tracing agent execution, monitoring model performance, tracking costs, debugging failures across multi-step agentic workflows) is not optional infrastructure. It is the difference between running AI in production with confidence or having it get stuck in the POC/experimentation stage. The observability problem for agentic systems is harder than for traditional software: the execution graphs are dynamic, the inputs and outputs are high-dimensional, and failures are often subtle rather than binary. Langfuse, which runs on ClickHouse Cloud to power real-time LLM observability at scale, is solving this problem.

For platform decision-makers, the takeaway is clear: the database is necessary, but not sufficient. The complete picture includes agent-ready interfaces and LLM observability tooling, natively integrated into the data platform experience.

A unified data platform for interactive AI-driven applications #

ClickHouse is evolving into what we see as the unified data platform for interactive AI-driven applications: one platform that handles transactional and analytical workloads, modern real-time data warehousing and conversational BI, and evolving AI-SRE driven observability workflows, at the performance and cost profile that AI-native applications demand.

The market is moving fast, and the platforms winning the next era are already visible. The question for every team making long-term infrastructure decisions is whether they are making the right bet now, while the switching costs are manageable, or whether they will be making a harder, more expensive decision later.